ALBERT WU

> studying cs + ds @ UW-Madison

research

A compilation of the research I have done.

N. Roberts, A. Wu, H. Brian, and F. Sala, “ProD SAFe: Programmatic Distillation for Safe and Assured Foundation Models & Robots”, supported by a DARPA ARC grant and in preparation for NeurIPS 2026, Fall 2025 - Present

We are developing a novel framework for large-scale distillation of foundation models into verifiable code for robotic tasks, with the goal of advancing the state of the art in both program synthesis and LLM verification. I am responsible for implementing the framework and running experiments across several simulator environments, focusing on how formal verification and weak supervision can improve the quality of the synthesized programs.

N. Roberts, J. Cho, Z. Gao, A. Wu, K. Buchanan, and F. Sala, “Compute Optimal Test-Time Scaling of Skills: Knowledge vs Reasoning”, submitted to COLM 2026, Fall 2025 - Present

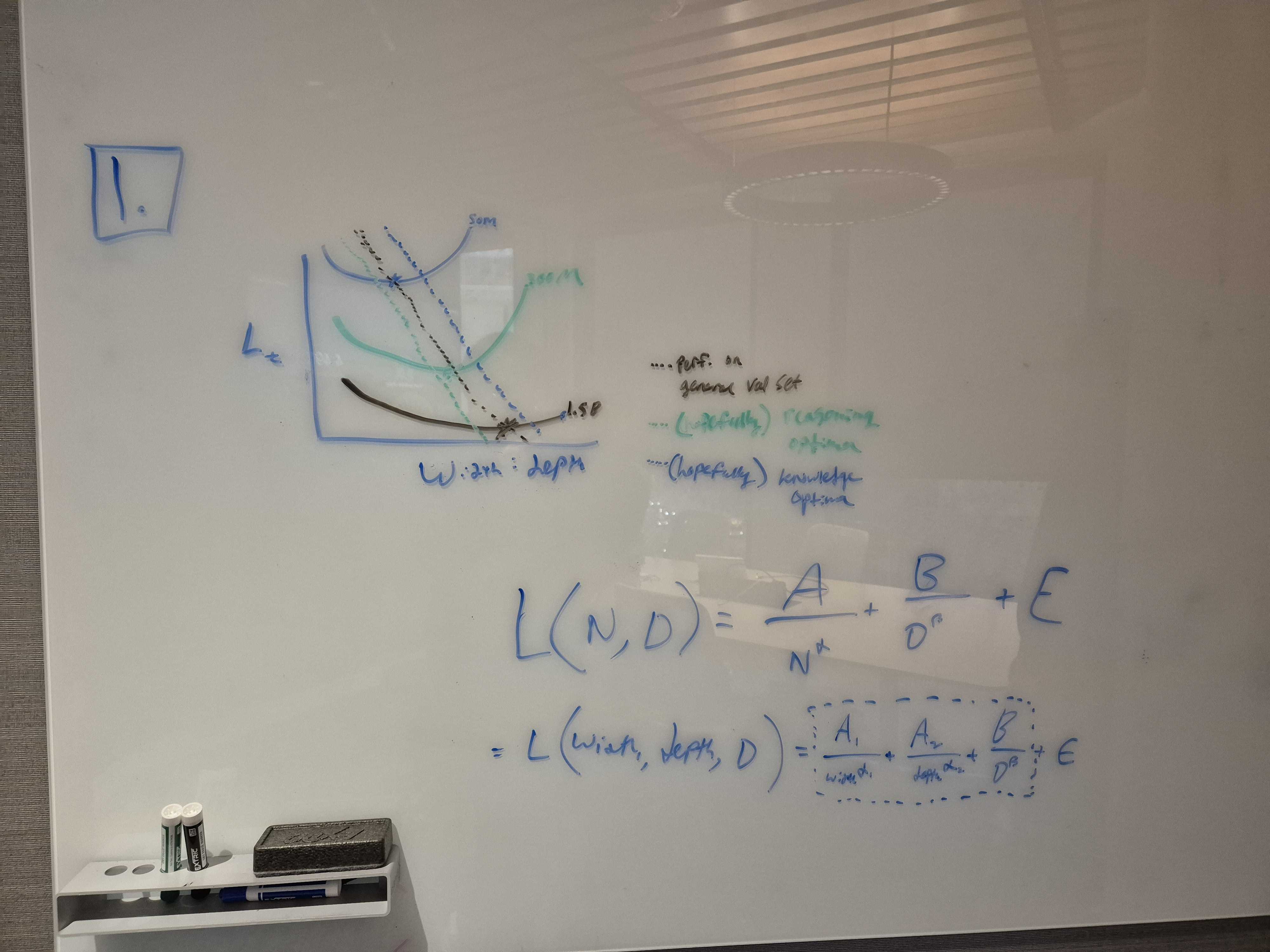

We are extending the Chinchilla scaling laws with test-time compute as a third power-law term, and investigating whether a fixed compute budget is better spent on training depth or on test-time compute, particularly for knowledge-intensive versus reasoning-intensive tasks. We aim to give practical guidance to teams training compute-optimal in-house models under budget constraints. I am primarily responsible for developing a rigorous evaluation methodology and running experiments on proprietary Cerebras checkpoints.
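For background, the baseline Chinchilla law models pretraining loss as L(N, D) = E + A/N^α + B/D^β, where N is parameter count, D is training tokens, and training compute is approximated as C ≈ 6ND. The Python sketch that follows is a minimal illustration of compute-optimal allocation under that two-term law only. It assumes the fitted constants published by Hoffmann et al. (2022, "Approach 3"), not anything from this project's Cerebras runs, and it does not model the test-time-compute term the project adds. The helper names `optimal_split` and `closed_form_params` are hypothetical.

```python
import math

# Fitted constants reported by Hoffmann et al. (2022), "Approach 3".
# These are public Chinchilla values, not results from this project.
E, A, B = 1.69, 406.4, 410.7
ALPHA, BETA = 0.34, 0.28


def chinchilla_loss(n_params: float, n_tokens: float) -> float:
    """Predicted pretraining loss L(N, D) = E + A / N^alpha + B / D^beta."""
    return E + A / n_params**ALPHA + B / n_tokens**BETA


def optimal_split(compute: float, iters: int = 200) -> tuple[float, float]:
    """Hypothetical helper: minimize L(N, D) subject to C = 6 * N * D.

    After substituting D = C / (6N), the loss is convex in x = ln N,
    so a ternary search over x converges to the global minimum.
    """
    def loss_at(x: float) -> float:
        n = math.exp(x)
        return chinchilla_loss(n, compute / (6 * n))

    # Search N between 1e6 parameters and the size that leaves 1e6 tokens.
    lo, hi = math.log(1e6), math.log(compute / (6 * 1e6))
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if loss_at(m1) < loss_at(m2):
            hi = m2  # minimum lies left of m2
        else:
            lo = m1  # minimum lies right of m1
    n = math.exp((lo + hi) / 2)
    return n, compute / (6 * n)


def closed_form_params(compute: float) -> float:
    """Setting dL/dN = 0 gives N_opt = G * (C / 6)^(beta / (alpha + beta))."""
    g = (ALPHA * A / (BETA * B)) ** (1 / (ALPHA + BETA))
    return g * (compute / 6) ** (BETA / (ALPHA + BETA))


if __name__ == "__main__":
    budget = 5.76e23  # an example budget in FLOPs
    n, d = optimal_split(budget)
    print(f"N ~ {n:.3g} params, D ~ {d:.3g} tokens, loss ~ {chinchilla_loss(n, d):.3f}")
```

The closed form is included only as a cross-check on the numerical search. A third, test-time term would add another axis to this same budget-constrained minimization, but its functional form here would be an assumption, so it is left out.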

D. Adila, C. Shin, A. Wu, N. Roberts, and F. Sala, “repE: A Novel Benchmark for Evaluating the Reusability of Reasoning Models”, Spring 2025

We developed a benchmark that evaluates how effectively reasoning models can reuse the thinking traces and outputs they previously generated for earlier user prompts, particularly prompts not included in their pre-training data. The benchmark serves as a new measure of both the quality of a model’s reasoning process and its reasoning potential. I was primarily responsible for implementing a synthetic data generator and verifier for symbolic differentiation tasks, using dynamic programming with path recovery.
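The generator-and-verifier description is compact, so here is one hypothetical reading of it as a Python sketch, not the benchmark's actual code. Expressions are nested tuples; `differentiate` fills a memo table over distinct subexpressions (the dynamic-programming part) while storing the rule that produced each entry, then walks the stored entries from the root to recover an ordered derivation (the path-recovery part). The tuple encoding, the small operator set (sum, product, sin, cos), and all function names are illustrative assumptions. The verifier checks a candidate derivative against central finite differences, which is one simple choice among several.

```python
import math
import random

# Hypothetical encoding: expressions are nested tuples such as
# ("mul", ("x",), ("sin", ("x",))) for x * sin(x).
UNARY = ("sin", "cos")
BINARY = ("add", "mul")
SAMPLE_POINTS = (-0.9, -0.5, -0.1, 0.3, 0.7)


def random_expr(depth: int, rng: random.Random) -> tuple:
    """Sample a random expression tree over x (illustrative generator)."""
    if depth == 0 or rng.random() < 0.3:
        return ("x",) if rng.random() < 0.7 else ("const", rng.randint(1, 3))
    if rng.random() < 0.5:
        return (rng.choice(UNARY), random_expr(depth - 1, rng))
    return (rng.choice(BINARY), random_expr(depth - 1, rng), random_expr(depth - 1, rng))


def evaluate(e: tuple, x: float) -> float:
    """Numerically evaluate an expression tree at x."""
    op = e[0]
    if op == "x":
        return x
    if op == "const":
        return float(e[1])
    if op == "add":
        return evaluate(e[1], x) + evaluate(e[2], x)
    if op == "mul":
        return evaluate(e[1], x) * evaluate(e[2], x)
    return getattr(math, op)(evaluate(e[1], x))  # math.sin or math.cos


def differentiate(root: tuple) -> tuple[tuple, list[str]]:
    """Memoized (DP) differentiation plus recovery of the rule path used."""
    table: dict = {}  # subexpression -> (derivative, rule name)

    def solve(e: tuple) -> tuple:
        if e in table:  # each distinct subexpression is solved only once
            return table[e][0]
        op = e[0]
        if op == "x":
            d, rule = ("const", 1), "identity rule"
        elif op == "const":
            d, rule = ("const", 0), "constant rule"
        elif op == "add":
            d, rule = ("add", solve(e[1]), solve(e[2])), "sum rule"
        elif op == "mul":
            d = ("add", ("mul", solve(e[1]), e[2]), ("mul", e[1], solve(e[2])))
            rule = "product rule"
        else:
            # Chain rule: d/dx f(g(x)) = f'(g(x)) * g'(x)
            outer = {
                "sin": ("cos", e[1]),
                "cos": ("mul", ("const", -1), ("sin", e[1])),
            }[op]
            d, rule = ("mul", outer, solve(e[1])), f"chain rule ({op})"
        table[e] = (d, rule)
        return d

    derivative = solve(root)
    steps: list[str] = []
    seen: set = set()

    def recover(e: tuple) -> None:
        # Walk the filled table top-down, emitting each subproblem once.
        if e in seen:
            return
        seen.add(e)
        steps.append(f"{table[e][1]}: {e}")
        for child in e[1:]:
            if isinstance(child, tuple):
                recover(child)

    recover(root)
    return derivative, steps


def verify(expr: tuple, candidate: tuple, h: float = 1e-5) -> bool:
    """Accept candidate as d/dx expr if it matches central differences."""
    for x in SAMPLE_POINTS:
        numeric = (evaluate(expr, x + h) - evaluate(expr, x - h)) / (2 * h)
        if not math.isclose(evaluate(candidate, x), numeric, rel_tol=1e-4, abs_tol=1e-4):
            return False
    return True
```

A typical use would be `expr = random_expr(3, random.Random(0))`, then `deriv, steps = differentiate(expr)` and `verify(expr, deriv)`. The recovered `steps` list could serve as a reference derivation, while `verify` can grade any model-produced derivative without needing it to match the reference syntactically.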